We have all been there: onboarding to a new codebase with an outdated doc, running into hurdle after hurdle and finally ending up with a janky setup that barely works, until you run a system update...😭. Devcontainers attempt to address this problem by combining reproducible, isolated Docker containers with the powerful Visual Studio Code editor (VSCode), giving a cohesive experience where we develop inside a container.

Why adopt devcontainers?

- A team can share and maintain a dev environment as code

- Low setup cost of dev environments on new machines, which makes for easy onboarding of new contributors

- No conflicts with existing dev environments on the same machine, e.g. no more juggling multiple Node versions

How does this work?

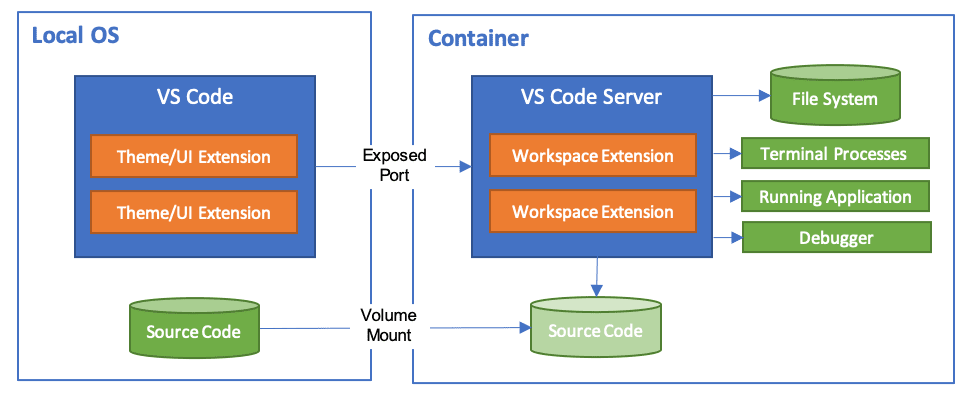

VSCode can develop in a number of remote environments1. This involves the VSCode client on the local host connecting to a VSCode server running on the remote host. The remote host is configured with everything needed to support development, e.g. the OS, tools and configuration. Docker2 is one of the supported remote environments.

Setting up a devcontainer

We will set up a simple JavaScript devcontainer environment.

A devcontainer environment is configured within the .devcontainer directory, which includes a Dockerfile, a docker-compose.yml and a devcontainer.json. At a high level, the Dockerfile defines the main container where runtimes and tools are installed; the docker-compose.yml defines how to build and run a container from that Dockerfile; and the devcontainer.json links the docker-compose.yml to VSCode and manages the extensions and settings to be installed.

Dockerfile

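```dockerfile
FROM mcr.microsoft.com/vscode/devcontainers/javascript-node:0-14-bullseye

# Install extra tools on top of the base image, then clear the apt cache to keep the image small
RUN apt-get update \
    && apt-get install --no-install-recommends -y \
        vim \
        tmux \
    && rm -rf /var/lib/apt/lists/*
```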

The VSCode team provides a series of base images that we can extend[^devcontainer-base-images]. These typically install some base tooling for the language on top of a Linux distro like Debian.

For example, the javascript-node image3 includes NodeJS, yarn, nvm and some Unix tools like zsh, zip, curl, git etc. Additional tooling can be installed by extending the base image in a Dockerfile.
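To confirm that the tools from the base image and the extra `apt-get` packages actually made it into the container, a small script can look them up on `PATH`. This is a minimal TypeScript sketch assuming a Linux container and Node's built-in `fs` and `path` modules; `findOnPath` and `missingTools` are hypothetical helpers, not part of any devcontainer tooling. `nvm` is left out because it is a shell function rather than an executable on `PATH`.

```typescript
import { accessSync, constants } from "node:fs";
import { delimiter, join } from "node:path";

// Hypothetical helper: returns the first executable named `tool` found on PATH, or undefined.
export function findOnPath(tool: string, pathEnv: string = process.env.PATH ?? ""): string | undefined {
  for (const dir of pathEnv.split(delimiter)) {
    if (dir === "") continue; // skip empty PATH segments
    const candidate = join(dir, tool);
    try {
      accessSync(candidate, constants.X_OK); // throws if missing or not executable
      return candidate;
    } catch {
      // not in this directory; keep looking
    }
  }
  return undefined;
}

// Tools we expect from the javascript-node base image plus the Dockerfile above.
const expected = ["node", "yarn", "git", "zsh", "curl", "vim", "tmux"];

// Hypothetical helper: lists expected tools that could not be found on PATH.
export function missingTools(tools: string[] = expected): string[] {
  return tools.filter((tool) => findOnPath(tool) === undefined);
}
```

Inside the devcontainer, `missingTools()` should return an empty array; on the host it would likely list whatever is not installed there.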

docker-compose.yml

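```yaml
version: "3.8"

services:
  main:
    build:
      context: .
      dockerfile: Dockerfile
    volumes:
      # Mount the project root (the parent of .devcontainer) at /workspace
      - ..:/workspace:cached
    # Keep the container alive so VSCode can attach to it
    command: sleep infinity
```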

Following the reference docker-compose.yml4, we define a main service that represents the container we will develop inside. It is configured to build the previously defined Dockerfile, mount the project directory (../) as a volume in the container at /workspace, and run indefinitely with sleep infinity.

devcontainer.json

```json
{
  "name": "javascript-devcontainer",
  "dockerComposeFile": "docker-compose.yml",
  "service": "main",
  "workspaceFolder": "/workspace",
  "settings": {
    "terminal.integrated.defaultProfile.linux": "zsh"
  },
  "extensions": ["dbaeumer.vscode-eslint", "github.copilot"]
}
```

We point the devcontainer at the main service in the docker-compose.yml, which serves as its entrypoint. We can also define VSCode settings and extensions5 to be installed in the container. In this example, we set the default terminal to zsh and install an ESLint integration6 and GitHub Copilot7.
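Since devcontainer.json is JSON, the fields a Docker Compose based setup relies on can be written down as a type and checked before VSCode ever sees the file. The following TypeScript sketch models only the properties used in this post (it is not the full schema from the devcontainer.json reference). It assumes the file has no comments: VSCode accepts comments in devcontainer.json, but `JSON.parse` would reject them. `ComposeDevcontainer` and `parseDevcontainer` are hypothetical names.

```typescript
// Hypothetical type: the subset of devcontainer.json used in this post, not the full schema.
export interface ComposeDevcontainer {
  name?: string;
  dockerComposeFile: string | string[];
  service: string;
  workspaceFolder: string;
  settings?: Record<string, unknown>;
  extensions?: string[];
  features?: Record<string, unknown>;
}

// Hypothetical helper: parses plain JSON (no comments) and checks the Compose-related fields.
export function parseDevcontainer(text: string): ComposeDevcontainer {
  const raw: unknown = JSON.parse(text);
  if (typeof raw !== "object" || raw === null || Array.isArray(raw)) {
    throw new Error("devcontainer.json must contain a JSON object");
  }
  const config = raw as Record<string, unknown>;

  // dockerComposeFile may be a single path or a list of paths.
  const composeFile = config.dockerComposeFile;
  const composeOk =
    typeof composeFile === "string" ||
    (Array.isArray(composeFile) && composeFile.every((f) => typeof f === "string"));
  if (!composeOk) throw new Error("dockerComposeFile must be a string or string[]");

  // service must name a service in docker-compose.yml; workspaceFolder is where VSCode opens.
  for (const key of ["service", "workspaceFolder"]) {
    if (typeof config[key] !== "string") throw new Error(`${key} must be a string`);
  }
  return config as unknown as ComposeDevcontainer;
}
```

This only checks types; it does not confirm that `service` actually exists in docker-compose.yml, which would require parsing YAML as well.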

Managing devcontainers

The prerequisites to use devcontainers are Docker8 and the VSCode Remote - Containers extension9.

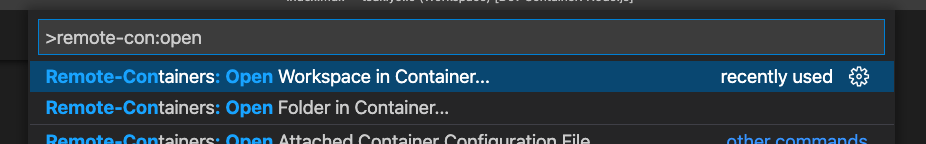

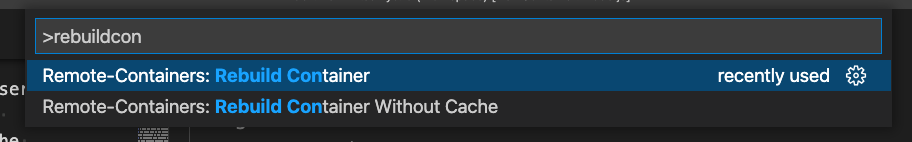

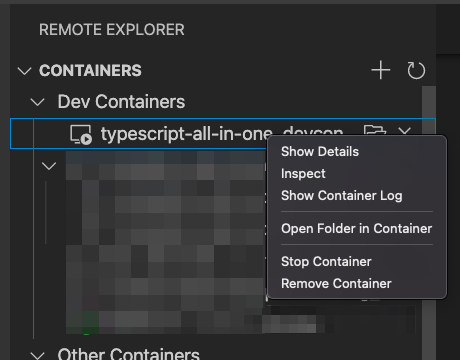

The extension adds VSCode commands for managing a devcontainer. First, open the command palette in VSCode with Ctrl+Shift+P (Cmd+Shift+P on macOS).

Launch a devcontainer

To launch a devcontainer, run Remote-Containers: Open Folder in Container... to open a folder, or Remote-Containers: Open Workspace in Container... to open a VSCode workspace10.

Rebuild a devcontainer

If you have made changes to the devcontainer configuration and want them to take effect, run Remote-Containers: Rebuild Container. This is also useful for getting out of sticky situations where the client is hanging, similar to the Developer: Reload Window command.

Remove a devcontainer

Containers are not removed from Docker between VSCode sessions, which saves time by letting us pick up where we left off in a previous session. In some cases we need to purge the devcontainer. To do this, run View: Show Remote Explorer, which presents a list of all devcontainers, then right-click a container to reveal the option to remove it.

Advanced use cases

Multiple containers

docker-compose lets us run multiple containers and connect them to each other. A great use case is running local databases to test against. These database containers can be removed and rebuilt without impacting the development environment.

```yaml
version: '3.8'

services:
  main:
    build:
      context: .
      dockerfile: Dockerfile
    volumes:
      - ..:/workspace:cached
    networks:
      - default
    depends_on:
      - dynamodb
      - pg
    environment:
      DYNAMODB_ENDPOINT: http://dynamodb:8000
      PG_CONNECTION_STRING: postgres://postgres:postgres@pg:5432/postgres?sslmode=disable
    command: sleep infinity

  dynamodb:
    image: amazon/dynamodb-local:latest
    networks:
      - default

  pg:
    image: postgres
    networks:
      - default
    environment:
      POSTGRES_PASSWORD: postgres

  pgweb:
    image: sosedoff/pgweb
    ports:
      - 5433:8081
    networks:
      - default
    depends_on:
      - pg
    environment:
      DATABASE_URL: postgres://postgres:postgres@pg:5432/postgres?sslmode=disable

networks:
  default:
```

The above example runs DynamoDB Local and Postgres instances on the same default network11 as the main service. This lets the main service reach them by service name through the conveniently injected environment variables DYNAMODB_ENDPOINT and PG_CONNECTION_STRING. There is also a pgweb instance exposed to the host machine on port 5433 for inspecting the Postgres database in a browser.
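Inside the main container, application code only needs to read those two variables; Docker Compose resolves the hostnames `dynamodb` and `pg` on the shared network. A minimal TypeScript sketch assuming Node's global `URL` class; `readDbConfig` and `DbConfig` are hypothetical names, and a real project would hand these values to its DynamoDB and Postgres clients.

```typescript
// Hypothetical shape: connection details derived from the variables injected by docker-compose.yml.
export interface DbConfig {
  dynamodbEndpoint: string;
  pgHost: string;
  pgPort: number;
  pgDatabase: string;
  pgUser: string;
}

// Hypothetical helper: reads and parses the injected environment variables.
export function readDbConfig(env: Record<string, string | undefined> = process.env): DbConfig {
  const dynamodbEndpoint = env.DYNAMODB_ENDPOINT;
  const pgConnectionString = env.PG_CONNECTION_STRING;
  if (!dynamodbEndpoint || !pgConnectionString) {
    throw new Error("DYNAMODB_ENDPOINT and PG_CONNECTION_STRING must be set");
  }
  // The WHATWG URL parser handles postgres:// as a generic URL, exposing host, port and path.
  const pg = new URL(pgConnectionString);
  return {
    dynamodbEndpoint,
    pgHost: pg.hostname, // "pg", the Compose service name
    pgPort: Number(pg.port || "5432"), // fall back to the default Postgres port
    pgDatabase: pg.pathname.replace(/^\//, ""),
    pgUser: decodeURIComponent(pg.username),
  };
}
```

Because the hostnames are Compose service names, this configuration only resolves from containers on the same network; from the host, Postgres is only reachable through pgweb on port 5433 in this setup.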

Forwarding ports

Often we are developing an HTTP API and would like to call it from the host machine. This is possible with forwarded ports[^devconatiner-forward-ports].

There are a few ways to do this, but the most portable is to publish the port in the docker-compose.yml, as it is not tied to the devcontainer runtime. This simply involves adding the ports field to the main service.

```yaml
version: "3.8"

services:
  main:
    build:
      context: .
      dockerfile: Dockerfile
    ports:
      # Publish container port 8080 on host port 8080 (host:container)
      - "8080:8080"
    volumes:
      - ..:/workspace:cached
    command: sleep infinity
```
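One gotcha with publishing ports: the server inside the container must listen on all interfaces (`0.0.0.0`), not only `localhost`, because traffic arriving through a published port reaches the container's network interface rather than its loopback. A minimal TypeScript sketch using Node's built-in `http` module; the `/health` route, the `PORT` variable, and the `route`/`startServer` names are hypothetical, chosen for illustration.

```typescript
import { createServer, IncomingMessage, ServerResponse, Server } from "node:http";

// Hypothetical routing logic, kept separate from the server so it is easy to test.
export function route(url: string | undefined): { status: number; body: string } {
  if (url === "/health") return { status: 200, body: "ok" };
  return { status: 404, body: "not found" };
}

// Hypothetical entrypoint: PORT is an assumed variable, defaulting to the published 8080.
export function startServer(port = Number(process.env.PORT ?? 8080)): Server {
  const server = createServer((req: IncomingMessage, res: ServerResponse) => {
    const { status, body } = route(req.url);
    res.writeHead(status, { "Content-Type": "text/plain" });
    res.end(body);
  });
  // Bind to 0.0.0.0 so requests through the published port (8080:8080) can reach the server.
  server.listen(port, "0.0.0.0", () => console.log(`listening on 0.0.0.0:${port}`));
  return server;
}
```

After calling `startServer()` inside the container, running `curl http://localhost:8080/health` on the host should print `ok`.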

Docker in Docker

Often we need Docker to build and run containers as part of development. This can be complex because we are already developing inside a container with devcontainers.

The simplest way to get this is to use a devcontainer feature12. Features are essentially scripts that run in the devcontainer to install extra tooling, and one of them is docker-in-docker13. A feature is enabled by including it in the features list in the devcontainer.json file.

```json
{
  "name": "javascript-devcontainer",
  "dockerComposeFile": "docker-compose.yml",
  "service": "main",
  "workspaceFolder": "/workspace",
  "settings": {
    "terminal.integrated.defaultProfile.linux": "zsh"
  },
  "extensions": ["dbaeumer.vscode-eslint", "github.copilot"],
  "features": {
    "docker-in-docker": "latest"
  }
}
```
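Once the feature is installed and the container rebuilt, both the Docker CLI and a Docker daemon should be available from inside the devcontainer. One quick check from Node is to run `docker version`, which exits non-zero when the CLI cannot reach a daemon. A minimal TypeScript sketch using `spawnSync` from Node's `child_process`; `dockerAvailable` is a hypothetical helper.

```typescript
import { spawnSync } from "node:child_process";

// Hypothetical helper: true if `docker version` succeeds, i.e. the CLI exists and a daemon answers.
export function dockerAvailable(command = "docker"): boolean {
  const result = spawnSync(command, ["version"], { stdio: "ignore", timeout: 10000 });
  // result.error is set if the binary is missing or the call timed out;
  // a non-zero status means the CLI ran but could not talk to a daemon.
  return result.error === undefined && result.status === 0;
}
```

Inside a rebuilt devcontainer with docker-in-docker enabled, `dockerAvailable()` should return `true`; without the feature it would typically return `false`.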

The case against devcontainers

VSCode lock-in

The elephant in the room is the dependency on VSCode. There is an open spec14 but no adoption by other vendors (e.g. JetBrains15). It is a difficult task to ask a dev to move editors; I've definitely been guilty of resisting this myself when reluctantly working on projects that require IntelliJ.

Balance this against the value of reducing friction for a new contributor or passerby to get set up and make their first PR, having runnable documentation of an ideal dev environment, and always having a fallback when our local environment has gone to shit. If you really don't like VSCode, you can always shell into the container and use vi 😅. In fact, there is a devcontainer CLI16 for running devcontainers outside VSCode, such as in CI pipelines.

Magic

There is a lot of magic, e.g. git config integration[^git-integraton], port forwarding17, etc. In most cases it works perfectly, but when things go wrong it can be difficult to find help on Google, as devcontainers are still a niche feature with a small community of users.

Performance

Docker has some known limitations on various operating systems, especially relating to disk performance18.

Some19 have encountered extremely slow package management in NodeJS projects with a large node_modules folder 🙄. The issues seem to stem from VSCode bind mounting2021 the local workspace into the container, which can degrade performance22 as Docker attempts to maintain consistency between the host and container. Some solutions involve excluding node_modules23 from the bind mount or modifying the consistency level to prioritise the container24.
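When excluding node_modules, for example by mounting a separate Docker volume over `/workspace/node_modules`, it helps to confirm from inside the container that the workaround is actually in effect. On Linux, one way is to check whether that path appears as a mount point in `/proc/self/mountinfo`, where the fifth field of each line is the mount point. This is a minimal TypeScript sketch under that assumption; `isMountPoint` and `nodeModulesIsSeparateMount` are hypothetical helpers, and the `/workspace/node_modules` path assumes the layout used in this post.

```typescript
import { readFileSync } from "node:fs";

// mountinfo escapes special characters in paths as octal, e.g. a space becomes "\040".
function decodeMountPath(field: string): string {
  return field.replace(/\\([0-7]{3})/g, (_m, oct: string) => String.fromCharCode(parseInt(oct, 8)));
}

// Hypothetical helper: true if `path` is listed as a mount point in the given mountinfo text.
export function isMountPoint(mountinfo: string, path: string): boolean {
  return mountinfo
    .split("\n")
    .filter((line) => line.trim() !== "")
    .some((line) => decodeMountPath(line.split(" ")[4] ?? "") === path); // field 5 = mount point
}

// Hypothetical helper (Linux only): reads the current process's mount table.
export function nodeModulesIsSeparateMount(path = "/workspace/node_modules"): boolean {
  return isMountPoint(readFileSync("/proc/self/mountinfo", "utf8"), path);
}
```

If `nodeModulesIsSeparateMount()` returns `false`, node_modules still lives on the bind-mounted workspace and package installs will go through it.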

Conclusion

Devcontainers are a big step towards improving developer experience in teams, especially for onboarding new contributors. They leverage Docker, the de facto industry standard for containers, but the devcontainer runtime remains tightly integrated with VSCode, which could be a risk for some. Personally, I will be using them for all my personal projects, as I love the portability they offer: I can get set up instantly on any computer with Docker and VSCode installed.

Footnotes

- https://github.com/microsoft/vscode-dev-containers/blob/main/containers/javascript-node/history/0.201.4.md#variant-14-buster
- https://github.com/microsoft/vscode-dev-containers/blob/main/containers/docker-existing-docker-compose/.devcontainer/docker-compose.yml
- https://code.visualstudio.com/docs/remote/devcontainerjson-reference#_vs-code-specific-properties
- https://marketplace.visualstudio.com/items?itemName=dbaeumer.vscode-eslint
- https://marketplace.visualstudio.com/items?itemName=ms-vscode-remote.remote-containers
- https://docs.docker.com/compose/networking/#specify-custom-networks
- https://code.visualstudio.com/docs/remote/containers#_dev-container-features-preview
- https://github.com/microsoft/vscode-dev-containers/blob/main/script-library/docker-in-docker-debian.sh
- https://youtrack.jetbrains.com/issue/IDEA-226455?_ga=2.29462271.1723067794.1645532802-1430991228.1643156032
- https://code.visualstudio.com/docs/remote/devcontainer-cli
- https://code.visualstudio.com/docs/remote/containers#_forwarding-or-publishing-a-port
- https://code.visualstudio.com/remote/advancedcontainers/improve-performance
- https://code.visualstudio.com/remote/advancedcontainers/change-default-source-mount
- https://burnedikt.com/dockerized-node-development-and-mounting-node-volumes/
- https://blog.rocketinsights.com/speeding-up-docker-development-on-the-mac/#bind-mount-delegated